Understanding Googlebot byte limits matters for website owners who want their pages indexed in full. Googlebot is one client of Google’s crawling architecture, which also serves products such as Google Shopping and AdSense. When an HTML page exceeds the 2 MB limit, Googlebot truncates the content, so anything past the cutoff never reaches Google’s indexing systems. Keeping pages within this limit, and following the web crawling best practices described below, helps ensure that your content can be indexed completely.

The byte restrictions placed on Googlebot shape how web content is fetched and indexed. The most notable of these is the 2 MB limit, which makes content structure a practical concern: webmasters should place the most critical information higher in the HTML so that it is not lost to truncation. Understanding Google’s crawling architecture also helps refine SEO content optimization. By optimizing content delivery and following web crawling best practices, sites can keep their most relevant information accessible to search engine crawlers.

Understanding Googlebot’s Crawling Architecture

Googlebot, Google’s primary web crawler, operates within a centralized crawling platform shared with various services, including Google Shopping and AdSense. This architecture allows Google to streamline its crawling and indexing processes. With each client, including Googlebot, utilizing unique configurations such as user-agent strings and robots.txt directives, it becomes clearer how Google manages the vast amount of web content. As webmasters, understanding this architecture is critical, as it impacts how our sites are crawled and indexed.
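To make the idea of per-client configuration concrete, the sketch below uses Python's standard `urllib.robotparser` module to evaluate one robots.txt file for several user-agent tokens. The robots.txt rules and the example.com URLs are hypothetical, and `SomeOtherBot` is a made-up crawler name; `Mediapartners-Google` is used here as the AdSense crawler's token.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the paths and rules are illustrative only.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /drafts/

User-agent: Mediapartners-Google
Disallow: /checkout/

User-agent: *
Disallow: /private/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text without making any network request."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)
for agent in ("Googlebot", "Mediapartners-Google", "SomeOtherBot"):
    for path in ("/drafts/post", "/checkout/cart", "/private/x"):
        verdict = parser.can_fetch(agent, "https://example.com" + path)
        print(f"{agent:22} {path:15} allowed={verdict}")
```

Each crawler is matched against its own group first and falls back to the `*` group only when no specific group applies, which is why the same URL can be allowed for one client and blocked for another.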

This centralized system is designed for efficiency, allowing for optimized crawling while minimizing server load. When Googlebot makes requests, it follows specific protocols that ensure only the most relevant and accessible content is processed. Consequently, insights into the crawling architecture not only benefit understanding Googlebot’s behavior but also guide SEO strategies to enhance content visibility. For those involved in SEO content optimization, such knowledge is invaluable in creating content that adheres to Google’s strict crawling guidelines.

Navigating Googlebot’s 2 MB Limit for Optimal Indexing

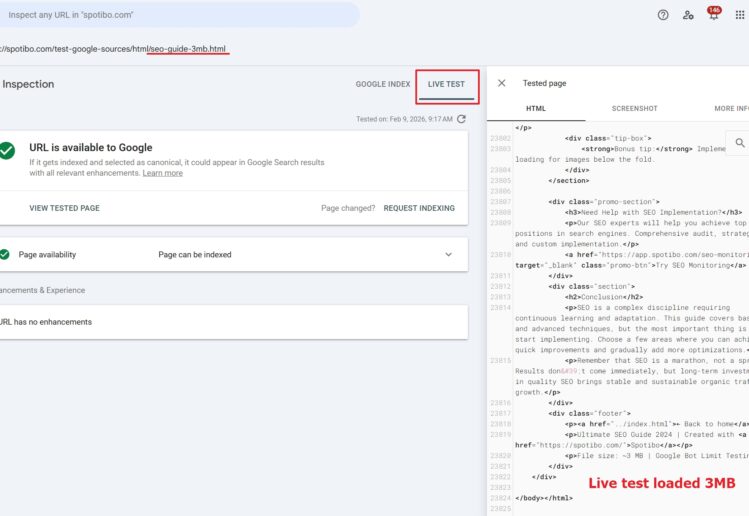

One key aspect of Googlebot’s behavior is its 2 MB limit on the amount of data it fetches from any given page, a detail emphasized in Gary Illyes’ description of Googlebot’s operation. When a page exceeds this threshold, the content is truncated: everything beyond the first 2 MB is ignored completely. Web developers and SEO professionals should therefore make sure that critical content, including meta tags and essential text, appears within the first 2 MB of the HTML response.

To avoid falling into the trap of data truncation, webmasters should implement best practices such as moving extensive JavaScript and CSS to external files. This not only helps stay under the 2 MB limit but also enhances page load performance. Furthermore, taking heed of the recommendation to position important HTML elements higher in the document structure will ensure that they remain accessible to Googlebot’s indexing algorithms. By understanding and respecting these limits, businesses can significantly enhance their chances of successful web crawling and optimization.
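One way to see how much of a page is taken up by inline JavaScript and CSS is to measure it. The following is a minimal sketch using Python's standard `html.parser`; the class and function names (`InlineWeightParser`, `inline_weight`) and the sample page are hypothetical. It counts every `<script>` without a `src` attribute as inline, which includes JSON-LD blocks.

```python
from html.parser import HTMLParser


class InlineWeightParser(HTMLParser):
    """Sums the UTF-8 byte size of inline <script> and <style> bodies."""

    def __init__(self):
        super().__init__()
        self._current = None  # tag whose body is being read, if any
        self.inline_bytes = {"script": 0, "style": 0}

    def handle_starttag(self, tag, attrs):
        if tag == "style":
            self._current = "style"
        elif tag == "script" and "src" not in dict(attrs):
            # Only scripts without a src attribute carry an inline body.
            self._current = "script"

    def handle_endtag(self, tag):
        if tag == self._current:
            self._current = None

    def handle_data(self, data):
        if self._current is not None:
            self.inline_bytes[self._current] += len(data.encode("utf-8"))


def inline_weight(html: str) -> dict:
    """Return total page bytes plus the bytes spent on inline script/style."""
    parser = InlineWeightParser()
    parser.feed(html)
    parser.close()
    return {
        "total_bytes": len(html.encode("utf-8")),
        "inline_bytes": sum(parser.inline_bytes.values()),
        **parser.inline_bytes,
    }


sample = (
    "<html><head><style>body{color:red}</style>"
    "<script>var a=1;</script><script src='x.js'></script>"
    "</head><body>Hi</body></html>"
)
print(inline_weight(sample))
```

The `inline_bytes` figure is a rough upper bound on what moving these blocks to external files would remove from the HTML response.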

Best Practices for Effective Web Crawling

Following web crawling best practices helps ensure that your site is indexed efficiently by Google. This means structuring and delivering content so that it fits within Googlebot’s operational parameters, especially the 2 MB limit. A common first step is to minimize inline styles and scripts, which can bloat the page size. Moving them into external files shortens the HTML document and makes it less likely that Googlebot hits the byte cutoff before reaching essential content.

Another crucial aspect is the organization of metadata and structured data within the page. These elements should ideally be positioned near the top of the HTML document to guarantee their fetching by Googlebot. Implementing these best practices allows webmasters to maximize their pages’ visibility and effectiveness in search results, ultimately driving more traffic to their sites. By staying informed and compliant with these strategies, businesses can better align their web presence with Google’s crawling and indexing needs.
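To check where metadata and structured data actually sit in a document, you can look up their byte offsets. The sketch below is illustrative only: the regular expressions are simplistic assumptions about how the tags are written, `element_offsets` is a hypothetical helper, and reading "2 MB" as 2 × 1024 × 1024 bytes is also an assumption. It reports where each element starts, not where it ends.

```python
import re

FETCH_LIMIT = 2 * 1024 * 1024  # assumption: "2 MB" read as 2 MiB

# Illustrative patterns only; real pages may need a proper HTML parser.
PATTERNS = {
    "title": rb"<title\b",
    "meta_description": rb"<meta\s+name=[\"']description[\"']",
    "canonical": rb"<link\s+rel=[\"']canonical[\"']",
    "json_ld": rb"<script\s+type=[\"']application/ld\+json[\"']",
}


def element_offsets(body: bytes, limit: int = FETCH_LIMIT) -> dict:
    """Map each element to (start_offset, starts_within_limit), or None if absent."""
    report = {}
    for name, pattern in PATTERNS.items():
        match = re.search(pattern, body, flags=re.IGNORECASE)
        if match is None:
            report[name] = None
        else:
            report[name] = (match.start(), match.start() < limit)
    return report


# Tiny demo with an artificially small limit so the effect is visible.
demo = (
    b"<html><head><title>T</title><style>" + b"x" * 50 + b"</style>"
    b'<meta name="description" content="d"></head></html>'
)
print(element_offsets(demo, limit=40))
```

In the demo, the 50-byte inline style block pushes the meta description past the artificial 40-byte limit, while the title still starts inside it.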

The Importance of Googlebot’s Crawling Insights

Understanding how Googlebot operates is essential for any website owner or SEO strategist. Insights into Googlebot’s crawling process, especially regarding the 2 MB limit, provide clarity on how to effectively manage and present web content. By recognizing the limitations imposed by Googlebot and adapting the site structure accordingly, webmasters can avoid potential indexing issues that may arise from excessive content sizes or poorly organized data.

Moreover, these insights extend beyond technical compliance; they reflect the broader shifts in SEO practices. Content creators need to prioritize quality and relevance over sheer volume, focusing on delivering key messages succinctly within the limits that Googlebot imposes. As Google continues to evolve its algorithms and crawling methodologies, those who are proactive in adapting to these changes will maintain a competitive edge in the ever-changing landscape of search engine optimization.

Optimization Techniques for Improved Crawl Efficiency

To enhance crawl efficiency and keep your site within Googlebot’s limits, apply standard SEO content optimization techniques: compress images, minify CSS and JavaScript files, and use performance analysis tools to pinpoint elements that push a page beyond the 2 MB limit. Regular audits of your website’s structure help identify and fix bloated code or excessive metadata that could prevent Googlebot from fetching a page in full.
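A regular audit can be as simple as comparing each page's HTML size with the limit. This is a minimal sketch, assuming the HTML bodies have already been collected as bytes; `audit_pages`, `PageAudit`, the 80% warning threshold, and the 2 MiB reading of "2 MB" are all illustrative assumptions. The limit is a parameter so it can be updated if Google changes it.

```python
from dataclasses import dataclass

FETCH_LIMIT = 2 * 1024 * 1024  # assumption: "2 MB" read as 2 MiB


@dataclass
class PageAudit:
    url: str
    size: int
    status: str  # "ok", "warn", or "over"


def audit_pages(
    pages: dict[str, bytes],
    limit: int = FETCH_LIMIT,
    warn_ratio: float = 0.8,
) -> list[PageAudit]:
    """Classify each page by HTML size relative to the fetch limit."""
    results = []
    for url, body in pages.items():
        size = len(body)
        if size > limit:
            status = "over"
        elif size >= limit * warn_ratio:
            status = "warn"
        else:
            status = "ok"
        results.append(PageAudit(url, size, status))
    # Largest pages first, so the riskiest ones head the report.
    return sorted(results, key=lambda r: r.size, reverse=True)


# Demo with a small limit; real use would pass full HTML responses.
pages = {"/a": b"x" * 10, "/b": b"x" * 85, "/c": b"x" * 120}
for row in audit_pages(pages, limit=100):
    print(row)
```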

Furthermore, employing caching solutions and optimizing server response times are practical steps that contribute to better crawl efficiency. A faster site not only ensures a smoother experience for visitors but also enhances the likelihood of Googlebot fetching more content. By continuously optimizing your platform using these techniques, webmasters can significantly improve how their pages are indexed and ranked in search results.

Future-Proofing Against Crawling Limit Changes

Given Google’s history of evolving its crawling metrics, staying prepared for potential adjustments in the 2 MB limit is essential for future-proofing your website’s SEO. Gary Illyes noted that while this limit is currently in place, it may change as the web continues to grow and evolve. To mitigate the risks associated with a sudden shift, webmasters should maintain a flexible content strategy, ensuring that critical information is readily accessible without relying on large content blocks.

Moreover, it’s wise to regularly monitor updates from Google regarding their indexing practices and crawling architecture. By aligning your website’s design and operational strategies with Google’s recommendations, businesses can shield themselves from potential impacts of crawling limit adjustments and maintain robust visibility in search engine results. This proactive approach is key to long-term SEO success.

Leveraging Content Delivery for Efficient Crawling

The manner in which content is delivered influences how efficiently Googlebot can crawl a site. A robust Content Delivery Network (CDN) can shorten loading times and improve connectivity for Googlebot, so that it receives the resources it requests quickly. Note, however, that a CDN does not change how many bytes a page contains: whether all critical content stays within the 2 MB fetching limit still depends on the size and structure of the HTML itself.

In addition, optimizing the delivery of web pages through techniques such as lazy loading can facilitate a better user experience while also making it easier for Googlebot to index relevant content efficiently. By refining how content is fetched and presented, webmasters can optimize their sites not only for Google but also for users, achieving an optimal balance that promotes both usability and SEO effectiveness.

Impact of Googlebot’s Policies on Web Development

Googlebot’s policies set a standardized framework for web development, influencing how sites are designed and structured. Understanding the implications of the 2 MB limit and the overall crawling architecture encourages developers to create more efficient, clean, and user-friendly web pages. By focusing on simplicity and clarity in design, developers can ensure that their sites conform to Google’s indexing preferences, improving their chances of higher search rankings.

Furthermore, productivity tools and plugins designed to promote SEO best practices can significantly aid developers in adhering to Googlebot’s guidelines. Leveraging these resources ensures that technical elements such as UX/UI design, site speed, and mobile responsiveness align seamlessly with Google’s crawling strategies. As user expectations and search capabilities evolve, the focus on alignment with Google’s policies will remain crucial for maintaining a competitive edge.

Adapting to Googlebot’s Evolving Requirements

As digital landscapes evolve, so too does Googlebot’s crawling and indexing framework. Consequently, webmasters and content creators must remain vigilant and adaptive to Google’s latest developments. Compliance with current best practices leads not only to improved search visibility but also ensures that your content is presented effectively to users. Staying updated on changes relating to Googlebot’s byte limits and crawling protocols informs better decision-making around web strategies.

Engaging with Google’s resources, such as official documentation and updates from industry leaders, will equip webmasters with the knowledge and tools necessary to navigate these changes confidently. Building a website that exceeds expectations, both in terms of compliance with crawling limits and user experience, will ultimately lead to successful SEO outcomes as we move forward into an era of increasing online competition.

Frequently Asked Questions

What are Googlebot byte limits and how do they affect SEO content optimization?

Googlebot byte limits refer to the maximum amount of data that Googlebot can fetch from a single webpage, specifically set at 2 MB for standard HTML pages. This limit is crucial for SEO content optimization because if a page exceeds this byte limit, Googlebot will only index the content up to 2 MB, potentially omitting important information that could impact search rankings. It’s recommended to organize critical SEO elements like meta tags and structured data higher in the HTML to ensure they are included within the byte limit.

| Key Point | Details |
|---|---|
| Centralized Crawling Platform | Googlebot operates as a client of a centralized platform shared with Google Shopping, AdSense, and others. |
| 2 MB Fetch Limit | Googlebot halts fetching after 2 MB, sending truncated content for indexing, while PDF limits are 64 MB. |
| Impact of HTTP Headers | HTTP request headers count towards the 2 MB limit, affecting data retrieval. |
| Best Practices | To prevent exceeding limits, move heavy CSS/JS externally and position important tags higher in HTML. |
| Future of Limits | The 2 MB limit may evolve, influenced by the growth of web content. |
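The truncation behavior summarized in the table can be simulated locally. The sketch below is not Googlebot's actual implementation: it simply assumes that header bytes are subtracted from the budget and that the body is cut at the remaining byte count. `simulate_fetch` and `survives` are hypothetical helpers, and the 2 MiB reading of "2 MB" is an assumption.

```python
FETCH_LIMIT = 2 * 1024 * 1024  # assumption: "2 MB" read as 2 MiB


def simulate_fetch(header_bytes: int, body: bytes, limit: int = FETCH_LIMIT) -> bytes:
    """Return the part of the body that fits once headers are charged to the budget."""
    budget = max(limit - header_bytes, 0)
    return body[:budget]


def survives(marker: bytes, header_bytes: int, body: bytes, limit: int = FETCH_LIMIT) -> bool:
    """Check whether a given snippet is still present after truncation."""
    return marker in simulate_fetch(header_bytes, body, limit)


# Demo with a 100-byte limit: 90 bytes of filler followed by a footer tag.
body = b"A" * 90 + b"<footer>"
print(survives(b"<footer>", 0, body, limit=100))   # headers ignored
print(survives(b"<footer>", 10, body, limit=100))  # 10 header bytes charged
```

With no header bytes charged, the 98-byte body fits and the footer survives; charging 10 header bytes leaves a 90-byte budget, so the footer is cut off.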

Summary

In summary, Googlebot byte limits significantly impact how content is fetched and indexed by search engines. Googlebot fetches only the first 2 MB of an HTML page and indexes the truncated result, while larger files like PDFs are handled under a separate 64 MB limit. Website owners should adapt their practices accordingly, moving heavy CSS and JavaScript into external files and placing critical content early in the HTML so that it remains accessible to crawlers. Awareness of these byte limits helps protect a site’s visibility in search results.
